Found 1094 results.

- Oracle HCM AI Career Coach: Transforming Employee Growth

- Oracle Compensation 26A: Beyond Worksheets in the Era of Agentic AI

- How to Control DFF Visibility Using Visual Builder Studio in Oracle Fusion HCM

- HEX 2.0 – Migrating Specific Extracts to the Enhanced Version – A Technical Architect's Perspective

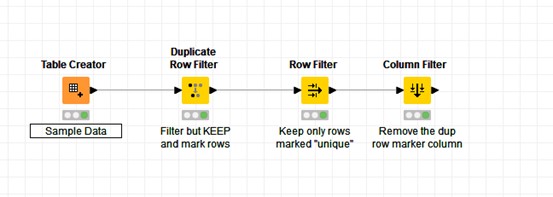

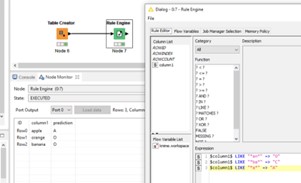

- How KNIME Can Automate Data Migration and Validation in Oracle HCM Cloud

- How Oracle Enterprise Data Management (EDM) Eases Data Management in HR Systems

- Manage Own Document Records with AI Assistance in Oracle HCM

- Configure Date of Birth Validation in VBCS

- Home with Ask Oracle: Enhancing Navigation in Oracle Fusion Applications

- Streamlining Approval Rule Migration in Oracle Fusion HCM with SOA Composer

- AI Agents Don’t Fail – Prompts Do – Design, Pitfalls, and Best Practices of Prompts

- How the PeopleSoft HRMS System Supports Modern HR Operations

- How to Set Up a Global Payroll System: Step-by-Step Guide

- Kovaion Secures Oracle PeopleSoft Innovation Partner Badge for the 4th Consecutive Year in 2026

- Learning and Development Agents: Enabling Intelligent Learning Automation in Oracle Learning Cloud

- Field Visibility and Requirement Changes in Oracle HCM: HCM Experience Design Studio vs Edit Pages in VBCS

- Innovative Hiring: QR Codes in Talent Acquisition

- Enhancing Security with Location-Based Access Control

- PeopleSoft FSCM Update Image 55: Sectionalized Landing Page

- Women’s Day Celebration 2026

- Valentine’s Day Celebration 2026

- Harvest Festival Celebration 2026

- What’s New in Oracle HCM Cloud 26B: A Complete Guide for HR Teams

- PeopleTools 8.62 – Query Administration and Partial Data Masking Enhancements

- Enhancing Data Accuracy in US Federal HCM: Position Effective Sequence for PAR (PUM Image 54)

- Newsletter – Mar 2026

- Oracle Fusion Agentic Applications: A New Way to Work

- Senior AI ML Agents Developer

- Webinar on PeopleSoft FSCM Update Image 55: Sectionalized Landing Page

- Enhanced AI-Generated Issuing Comments for Document Records and Improved Descriptions for Document Types

- How to Create a Branch in Redwood Using Visual Builder Studio (VBS): Step-by-Step Guide

- Sales Executive

- Enhancing Oracle Learning Cloud with an AI-Driven Agent Using AI Agent Studio

- How to Use Generative AI to Create Journey Templates in Oracle HCM

- AI-Powered Journeys Assistant in Oracle HCM 26A: Features, Setup, and Business Benefits

- Kovaion Drives Oracle PeopleSoft Innovation Badge Wins for Federal Bank and Thapar Institute in 2026

- How to Add Succession Management as a Subscriber to Content Section in Oracle Fusion HCM Redwood

- Newsletter – Feb 2026

- Webinar on Oracle HCM Cloud | Manager & Worker Concierge – AI Agents

- Digital Marketing Executive

- How to Integrate Microsoft Teams with Oracle Learning Cloud for Better Employee Learning

- Oracle HCM 26A Update: How Jobs Assistant Transforms Job Creation with AI

- WordPress Developer

- Front Desk and Compliance Executive

- Webinar: The Next-Gen Oracle Foundation on OCI | Retain the reliability you need, gain the agility you want

- Oracle APEX Services

- Content Writer

- Newsletter – Jan 2026

- Oracle AI Enablement Services

- Top 8 HCM Performance Management Features for Workforce Excellence

- 7 Ways Cloud ERP Solves the Biggest Legacy ERP Problems

- Senior Oracle APEX Developer

- Intelligent HR and Payroll for the Next-Gen Workforce

- Event – Mumbai 2026

- Creative Product Designer

- Christmas Celebration 2025 | Kovaion

- International Men’s Day 2025 | Kovaion

- Top 10 Reasons Customers Choose Oracle Fusion Cloud HCM

- Newsletter – Dec 2025

- PeopleSoft HCM PUM 53 – Embedded Insight on Remote Worker Approval Request Page

- Awards and Accreditations

- HCM PUM 53 – Notification Publisher Enhancements

- Senior Manager – HR & Recruitment

- Kovaion Fortifies Defenses: Achieving Cybersecurity Certification to Elevate Trust and Threat Protection

- Motion Graphics & Visual Designer

- Step-by-Step Guide to a Successful Oracle Fusion HCM Implementation 2025

- Head of Operations & Transformation

- Oracle HCM Cloud Time and Labor Implementation Guide

- Streamlining ILT Attendance in Oracle Learning with HSDL and Redwood Experience

- Streamline Global Compensation with Oracle Redwood Salary Switcher

- Streamlining Employee Journeys and Tasks with Generative AI in Oracle Journeys

- Newsletter – Nov 2025

- How to Configure Phone Number Validation in VBCS

- Workforce Compensation in Oracle Cloud HCM – Comprehensive Guide

- How the Advanced Instructions Layout Configuration Transforms Journey Task Notes in Oracle HCM 25C

- How to Create a Job Offer in Oracle Recruiting Cloud 25C Redwood Experience

- How to Configure AI Agent Task Type for Guided Journeys in Oracle HCM Cloud

- Oracle HCM Cloud Compensation Module Complete Guide

- PeopleSoft HCM Consultant

- Associate Oracle APEX Developer

- Webinar – PeopleSoft HCM PUM 53: Discover the New Landing Page and Dashboard Enhancements

- Oracle Visual Builder (VBCS) & Integration Cloud (OIC) Consultant

- Webinar: Oracle India Cloud Payroll | Automating Compliance and Precision in Payroll Processing

- Oracle HCM Cloud Consultant – UK

- How to Use HCM Data Loader for Responsibility Templates in Oracle HCM Cloud

- Streamlining Academic HR: Oracle HCM in Action

- Associate Oracle DBA / PeopleSoft Administrator

- PeopleSoft Administrator / Oracle DBA

- Why the Logistics Sector Is Embracing Oracle Cloud HCM for Workforce Agility

- How Oracle HCM Enables Smarter Talent Risk Management for Financial Firms

- 5 HCM Challenges in Logistics Solved with Oracle’s Automation

- Newsletter – Oct 2025

- How Oracle Cloud HCM Helps Logistics Firms Manage Multi-Shift Operations

- Oracle Cloud HCM 25D Release – Update

- How to Implement Queue Prioritization in PeopleSoft Integration Broker

- 8 Ways Oracle HCM Simplifies Hotel Staff Scheduling & Compliance

- Senior UI/UX Designer

- Senior Node.js Developer

- Diwali Celebration 2025

- Ayudha Pooja 2025

- Onam Celebration 2025

- Enterprise Service Automation in PeopleSoft FSCM Update 54

- Implementing Audit Triggers and Audit Jobs in PeopleSoft

- PeopleSoft FSCM Image 54: Introducing Fluid Workcenters

- The Future of HR with AI

- Content Reference Administration in PeopleTools 8.62

- How to Create Custom Pages Using Fragments in Oracle Visual Builder Studio (VBS)

- The Future of HR with AI – V1

- The Future of HR with AI

- UKOUG Conference DISCOVER 2025

- The Future of HR with AI

- Work Management Framework in PeopleSoft FSCM PUM 53

- PeopleSoft FSCM PUM 53 – Enterprise Service Automation (ESA) Features

- The Future of HR with AI

- SMS Notifications in PeopleTools 8.62: Phone Number Verification Deep Dive

- How to Create Recruitment Campaigns Using Oracle Fusion Cloud Recruiting 25C Redwood Experience

- Newsletter – Sep 2025

- PeopleSoft FSCM Image 53: Requester Dashboard & Approval Statuses

- How Resorts Use Oracle Cloud HCM to Deliver 5-Star Employee Experiences

- Exploring the Redwood Experience – HCM Data Loader in Oracle Cloud

- Why CFOs Are Advocating Oracle HCM for HR and Payroll Accuracy

- How Oracle HCM Simplifies Multi-Property Hotel Workforce Management

- Navigating Oracle HCM Quarterly Updates: What HR & IT Teams Must Do

- 5 Ways Oracle Cloud HCM Modernizes HR for Universities & Institutions

- Oracle HCM in Hospitality: From Housekeeping to HR Excellence

- Oracle Redwood Migration Timeline and Testing Guide

- Discover What’s New in the Oracle Cloud Redwood 25C Release

- Oracle HCM Cloud Techno-Functional Consultant – Bangalore

- Webinar: The Future is Redwood – Transforming Recruiting with Oracle

- Oracle Redwood Experience: How to Create Job Offers in Oracle Recruiting Cloud

- 7 Benefits of Oracle HCM for Multi-National Finance Organizations

- How to Use Nomination Awards in Oracle Fusion HCM 25C to Boost Employee Engagement and Workplace Culture

- Product Marketing Manager

- Simplifying Hiring with the Recruiting Activity Center in Oracle Recruiting Cloud

- Multi-Select Goal Sharing for Managers in Oracle HCM Cloud

- Senior Sales Executive – Sportzia

- How Healthcare HR Teams Improve Compliance with Oracle Cloud HCM

- Why Retailers Are Adopting Oracle Cloud HCM for Store-Level Workforce Efficiency

- Top 5 Oracle HCM Features for Managing Hourly & Seasonal Retail Staff

- 6 Ways Oracle HCM Helps Combat Burnout in Healthcare Staff

- 6 Ways Oracle HCM Enhances Store Manager Productivity

- 7 Oracle HCM Benefits for Managing Unionized or Skilled Workers

- How to Enable Flexible Reporting in Oracle Redwood Dashboards with OTBI

- Newsletter – Aug 2025

- Kovai MarathON 2025 – 5th Edition

- Top 7 Oracle HCM Solutions for Nurse Scheduling & Credentialing

- How to Assign Goals to Multiple Employees in Oracle Fusion HCM

- Oracle Redwood Experience: New and Improved Filters on Candidate List Pages

- Oracle Fusion Webinar: Streamline Supplier Invoice to Payment with Best Practices

- 5 HR Challenges in Healthcare and How Oracle Cloud HCM Solves Them

- 7 Oracle HCM Reports You Should Be Using to Make Strategic HR Decisions

- How Oracle Cloud HCM Helps HR Teams Reduce Employee Turnover

- 5 Oracle Cloud HCM Features That Ensure HR Compliance in Banking

- How Oracle Cloud HCM Bridges the Skills Gap in Manufacturing

- From Warehouse to Checkout: Streamline Retail HR with Oracle HCM

- 8 Ways Oracle HCM Delivers ROI Beyond Payroll

- Why Fast-Growing Companies Prefer Oracle Cloud HCM Over Legacy Systems

- 10 Reasons HR Leaders Are Switching to Oracle Cloud HCM in 2025

- 5 Oracle HCM Features Every Retailer Needs for Seasonal Hiring Success

- 7 Ways Oracle Cloud HCM Is Revolutionizing Healthcare HR in 2025

- 6 Reasons Logistics Leaders Are Choosing Oracle HCM for Shift Planning

- 5 Oracle Cloud HCM Benefits for Universities Managing Faculty & Staff

- Top 5 Reasons Financial Firms Choose Oracle HCM for Talent Compliance

- 8 Ways Oracle Cloud HCM Simplifies Workforce Management in Hospitality

- Admin Executive

- Enhance Hiring with AI Candidate Matching in Oracle Recruiting Cloud

- Enhanced Candidate Duplicate Check in Oracle Redwood | VBS Upgrade

- PeopleSoft Compare Report Navigation Enhanced in PeopleTools 8.62

- PeopleSoft Campus Solutions E-Verification Module for Student Background Check

- Redwood Download Salaries in Oracle HCM 25B

- Newsletter – Jul 2025

- Streamline Compensation Tasks with New Save Feature in Redwood HCM

- Data Scientist

- How Retailers Manage Holiday Workforce Pressure with Oracle Cloud HCM

- PeopleSoft HCM PUM 52: Real-Time Feedback with Automated Questionnaires

- Oracle Cloud HCM 25C Release – Update

- PeopleSoft Administrator

- Junior Project Manager

- How Oracle Fusion Cloud HCM Helps Healthcare Organizations Overcome Workforce Shortages

- Oracle Cloud HCM 24C Release – Update

- Oracle Cloud HCM 24B Release – Update

- Oracle Cloud HCM 24A Release: Updates

- Sportzia Logo Launched – A Bold Identity for the Ultimate Sports Platform

- Top Gun – Oracle HCM Cloud

- Configure Quick-Fill and Admin Access for Time and Labor in PeopleSoft HCM PUM 52

- Limit Attachments in HCM – PeopleSoft PUM 52 Update

- Kovaion Inaugurates Global Capability Centre in Coimbatore

- How to Create a Search Category for Insights in PeopleTools 8.61

- Embedding Insights in Fluid Pages in PeopleSoft 8.61

- Newsletter – Jun 2025

- Creating a Validation for DFF Fields in Document of Record Using Visual Builder Studio – Oracle HCM Cloud

- Noncatalog Course Request in Oracle Learning Cloud

- Instructor-Led Training in Oracle Learning Cloud – A Comprehensive Guide

- Oracle Cloud HCM 24D Release: Key Updates

- Webinar: Workforce Shift Scheduling with Oracle HCM

- Oracle Cloud HCM 25A Release – New Features, Benefits & What’s Changed

- Third-Party API Integration in PeopleSoft – Enhance Application Functionality & Connectivity

- Sales Executive

- Fluid Performance Notes in PeopleSoft HCM PUM 52

- Campus Community Emergency Contacts Enhancements – PeopleSoft CS PUM 33

- Looking for an Oracle HCM Consultant?

- Top Consulting Companies for Digital Transformation Services

- Webinar on Creating Redwood UI Forms with REST API Integration and Smart Action Chains

- How Oracle Fusion HCM Analytics Revolutionizes Human Capital Management

- Exploring Oracle Cloud Redwood 25B Release

- What’s New in Oracle Cloud HCM 25B? Key Features & Redwood Enhancements Explained

- Automated Rejection of Job Requisitions after a Defined Time Period

- Oracle Recruiting Cloud Implementation Guide

- Oracle HCM Employee Self Service

- Exploring PeopleSoft Employee Self Service

- PeopleSoft Upgrade Strategy

- PeopleSoft on Oracle Cloud

- PeopleSoft HCM Image 25 Highlights

- Employee Snapshot – A Concise Preview for Managers

- PeopleSoft Mobile – Native App with No PeopleSoft Customization

- Personal Analytic Notifications – PeopleTools 8.57

- PeopleTools 8.54: PeopleSoft Fluid UI

- PeopleSoft Innovator 2022: BITS Pilani & Kovaion

- Position Management – Enhancements in PUM 29

- Oracle ERP Cloud – 22A Update

- Job Data Modernization: Maintain Comments on Data Changes

- PeopleSoft Pivot Grid

- PeopleTools 8.62 – Interactive Embedded Insights & Print to PDF

- Newsletter – May 2025

- A Step Deeper into the Notification Features – PeopleTools 8.59

- Bulk Approval Made Easy through BPM Worklist

- PeopleTools 8.55 – Pivot Grid Enhancement

- Employee Skills Identification & Skill Gap Analysis

- PeopleSoft FSCM 44 – Enhanced Corporate Card Data

- Bulk Import of Lookup Types/Codes Using File Based Loader in HCM Cloud

- PeopleSoft Workcenters

- PeopleTools Custom Branding

- PeopleSoft Configurable Drop Zone – Will It Help Reduce Customizations?

- Empower Business Users with Custom ESS Jobs in HCM Cloud

- Dependent Document Upload & Approval | PUM 41

- AI/ML in Agri-Food Industries: Starting from the Sweetest Place

- Page and Field Configurator

- PeopleSoft 8.62 – Simplified Global Search

- Understanding Oracle HCM Cloud HR Helpdesk

- Top Benefits Of ERP Systems

- Exploring Oracle ERP Modules

- How Staffing and System Management Transform with Oracle Cloud HCM Migration from PeopleSoft

- What is Procurement?

- How Oracle AI Agents Are Transforming the Employee Experience for HR Leaders

- Implementing KovaionAI Help Desk: Before and After Metrics that Matter

- 7 Mistakes to Avoid During Oracle HCM Implementations

- Implementing Generative AI Features in Oracle Cloud HCM

- Designing Scalable Mobile Banking Apps with KovaionAI Builder Platform

- Oracle Database Administrator

- PeopleSoft Administrator

- Oracle Business Development Manager – UK

- Download Absence Request History to Excel – PeopleSoft PUM 51

- Change Tracking Notifications in PFC – PeopleSoft HCM PUM 51

- Filtering Enhancements for Benefits Statements in PeopleSoft HCM PUM Image 50

- Market Research Intern

- Content Writer – Intern

- Asset Lifecycle Management Features – PeopleSoft FSCM PUM 52

- The GCC Leadership Conclave 2025 – Riding the Wave of Innovation and Talent

- Women’s Day Celebration 2025

- Valentine’s Day Celebration 2025

- Harvest Festival Celebration

- International Men’s Day Celebration 2024

- Kovai Elites Marathon 2025

- Christmas Day

- Technical Training Manager

- Soft Skill Trainer

- Senior Graphic Designer

- Head of Digital Marketing

- My Activity Center Demystified – Features and Customization in Oracle Fusion HCM

- Hosting Hiring Events with Oracle Recruiting Cloud

- AI Assist for Job Requisition Posting Descriptions

- TMB’s Oracle HCM Cloud Transformation with Kovaion

- People & Leadership Excellence Conference 2025: Insights, Innovation, and a Win for Kovaion

- How to Implement Role-Based Access Control (RBAC) in Banking Apps with KovaionAI

- Top 10 Use Cases of KovaionAI in Low-Code Development for Enterprises

- Top 7 Low-Code Development Trends You Can’t Ignore in 2025

- How Generative AI is Changing the Future of Low-Code Platforms

- Low-Code for SaaS: How Startups are Disrupting Markets in 2025

- How KovaionAI’s Workflow Engine Streamlines Complex Business Processes

- Data Analyst Intern

- MERN Stack Intern

- Top 10 Features of KovaionAI’s AI-Driven Low-Code Platform in 2025

- Top 10 AI-Powered Low-Code Platforms Revolutionizing Development in 2025

- PeopleSoft Forms and Approval Builder

- PeopleSoft Enhanced Feature in Reporting

- PeopleSoft PUM 42 Feature – Control Dependent Data via Configuration

- PeopleSoft at UKOUG Applications Conference & Exhibition 2017 #ukoug_apps17 #PeopleSoft

- Fluid Attachments Framework in PeopleSoft

- Data Population in HCM by Parsing Attachments

- HCM Cloud Time Capture | Relevance to the Current Hybrid Mode

- Have you tried PeopleSoft Chatbot yet?

- Webinar | Advanced Workflow Features for Claim Management

- Newsletter – April 2025

- PeopleTools 8.62 – Smarter, Simpler PeopleSoft Now Available

- Why KovaionAI is the Best AI-Powered Employee Time Tracking Software for Businesses

- Why KovaionAI is the Ultimate AI-Driven Low-Code Platform for 2025

- Junior Accounts Executive

- How KovaionAI’s AI-Powered Chatbot Enhances Customer Engagement

- Handling Data Transformation and Validation with APIs in Low-Code Platforms

- How KovaionAI Optimizes API Management for Enterprise Applications

- Seamless Data Integration with KovaionAI’s Intelligent Data Pipeline

- Webinar – Simplifying Expenses with Oracle Digital Assistant (ODA)

- Why KovaionAI is the Ultimate CRM Solution for 2025

- Setup and Usage of the Pipeline Requisition

- Transforming Talent Acquisition with Oracle Recruiting Booster

- PeopleSoft Payable Time Dashboards – Revolutionizing Time and Labor Analytics

- Embedding Insights in Fluid Workcenter in PeopleSoft 8.61

- How KovaionAI Automates IT Support with Intelligent Service Management

- Managing Remote Work Eligibility at Job Code and Position Level

- Rapid Calculation in Global Payroll – PeopleSoft HCM Image 51

- Webinar | Simplified Claim Management Using AI Agents

- PeopleSoft Supply Chain Management – All You Need to Know

- Unlocking PeopleSoft Reporting: Essential Tools & Strategies

- Pros and Cons of PeopleSoft – A Balanced Overview

- How KovaionAI Integrates Digital Signature Features for Effortless Transactions

- Oracle HCM Cloud Goal and Performance Management

- Oracle HCM Cloud Mobile App – Comprehensive Guide

- Oracle HCM Cloud Security

- Moving from PeopleSoft to Oracle Cloud HCM? The Business Case Explained

- Top 10 Features of KovaionAI’s Knowledge Base Tool for Business

- Top Reasons Why KovaionAI is the Best Low-Code Platform for Automation

- 10 Ways a Workflow Management System Can Accelerate Your Business Growth in 2025

- Maximizing Customer Insights with KovaionAI’s AI-Powered CRM Solutions

- Oracle HCM Cloud Profile Management – Features, Benefits, and Best Practices

- Top 12 Customer Support Desk Software Every Startup Needs in 2025

- Time Reporters Waiving Meal Breaks – PeopleSoft HCM Update Image 50

- PeopleSoft PeopleTools 8.61 New Features Overview

- Oracle Extends Premier Support for PeopleSoft Through 2036

- 10 Key Features to Look for in an Enterprise-Grade Low-Code Platform

- The 20 Best Help Desk Software in 2025

- Kovaion Offers Oracle Cloud Success Navigator – Service

- Building Mobile-First Applications with KovaionAI’s Cross-Platform Framework

- Building Real-Time Analytics Dashboards with KovaionAI in Under a Day

- Newsletter – March 2025

- U.S. Wage Statement Tax Data Section Update – PeopleSoft HCM Update Image 51

- Enhancing ePerformance with Instructions at Step Level and Section Level

- PeopleSoft FSCM Update Image 52 – Simplifying Workflows with Enterprise Service Automation Features

- End-to-End Testing Strategies for KovaionAI-Built Applications

- How KovaionAI’s API Gateway Enables Seamless Third-Party Integration

- Webinar – Embedding Insights in Fluid Workcenters in PeopleSoft

- Delivering Timely Updates and Opportunities with HCM Communicate

- How KovaionAI Enables Agile Methodology in Enterprise Software Development

- The Technical Foundation Behind KovaionAI’s Enterprise-Grade Security

- Managing Supplier Profile Change Requests in Oracle Fusion

- Webinar | Empower Your Business with AI-Driven Solutions from Kovaion AI

- Newsletter – February 2025

- Webinar on Travel Authorization and Expense Management – PeopleSoft FSCM PUM 50

- Webinar – Employee Joining Dashboard in PeopleSoft Fluid

- Low Code for Small Business

- Webinar | Design Your Custom Application with Powerful Kovaion AI Platform

- Webinar | Create a Cutting-Edge eCommerce Management Application

- Webinar – Streamlining HR with E-Signatures & Redwood Journeys in Oracle HCM

- Newsletter – January 2025

- Low Code for Mobile Apps

- Low Code for Startups

- Boost Your Cloud with Kovaion’s Oracle Cloud Infrastructure (OCI) Services

- Craft an Expense Management Application in Less than 30 Minutes

- Webinar – Key Enhancements in “Performance Management”

- Modern Solutions for Retail and Wholesale

- Enhanced Learning Recommendations: Audience Selection & Streamlined Workflow – Quickread

- Workforce Modeling – Predict, Plan, and Optimise with Oracle HCM – Webinar

- Oracle Cloud Infrastructure (OCI) Services

- Oracle DBA Services

- Digital Transformation Services and Solutions

- Newsletter – December 2024

- Webinar | Automate Employee Management in Just 30 Minutes

- Unlocking the Power of Oracle HCM Help Desk

- Page Customization with Oracle HCM Fusion Visual Builder Tool

- Webinar | GenAI-Powered App Builder Platform Capabilities

- Webinar: How to Create Help Desk Management Software using KovaionAI

- PeopleSoft India SIG 2025 – Oracle Partner – Kovaion

- Newsletter – November 2024

- Oracle HCM Communicate with 24B update – Mastering Targeted Communications

- Webinar | Key Takeaways from the Bengaluru Tech Summit (BTS) Event

- Creating an HDL File through OIC Integration | Quickreads

- Diwali Celebration 2024

- Webinar on Oracle Grow | Empowering Employee Development and Internal Mobility

- Webinar | Exciting Features to be Unveiled for Kovaion App Builder at BTS Event

- Newsletter – October 2024

- Webinar | Advanced Workflow Automation using Kovaion App Builder Platform

- Webinar | Key Highlights of ‘PeopleTools 8.61 – Security’

- Newsletter – September 2024

- Oracle Redwood Implementation

- Oracle ERP Cloud | Asset Management | QuickRead

- Custom API Development using App Builder Platform | Low-Code Platform Webinar

- Customizing Applications with Redwood Visual Builder

- Team Management with Oracle Redwood Experience: The Team Activity Center

- Onam Celebration 2024

- Kovai MarathON – 2nd Edition

- Webinar on Talent and Succession Plan in PeopleSoft

- Newsletter – August 2024

- The Power of Redwood & GenAI in Oracle HCM Cloud | Webinar

- Unlocking Enterprise Potential | Leveraging LLMs with RAG and Vector Databases | Webinar

- Newsletter – July 2024

- Webinar | PeopleSoft HCM PUM 49 | Configured Time Summary in Fluid Timesheet

- Webinar | Why You Need an Enterprise Knowledge Management Platform

- Music Day Celebration

- Blood Donation Day

- Kovai Marathon – Family Run

- Women’s Day Celebration

- Republic Day Celebration

- Pongal Celebration

- New Year Celebration

- Revolutionizing HR with GenAI | In-Person Event at Oracle Tech Hub

- Newsletter – June 2024

- Webinar | Highlights of PeopleSoft HCM PUM 49 | Recruiting Enhancements & Insights Dashboard for PFC

- Webinar | Business Process Automation Using Low-Code Platform

- Small Business App Development Platform | Low-Code/No-Code

- Newsletter – May 2024

- Webinar | PeopleSoft HCM PUM 48 | Fluid Leave Donation

- Webinar | Creating a Reliable App Builder Platform Using AWS EKS

- App Builder

- Newsletter – April 2024

- Oracle Fusion Cloud HCM | Generative AI Feature | Performance Review

- Oracle HCM Cloud | How to Add a Location to Manage Calendar Event

- Webinar | Key Highlights of Oracle CloudWorld Tour 2024

- Webinar | Gen AI Use Cases in App Builder Platform

- Newsletter – March 2024

- Delving into the Highlights of PeopleSoft India SIG 2024

- Oracle Integration Cloud | Conditional Loops

- Oracle HCM Cloud | Display Scrolling Text in the Dashboard

- Oracle HCM Cloud | Touchpoints

- Low Code for Insurance

- PeopleSoft India SIG 2024

- Bulk Learn Withdrawal | Oracle Learning Cloud Module | Oracle HCM Cloud

- Oracle CloudWorld Tour Mumbai – 2024

- Absence Application Page | Hide or Show the Save & Close feature

- Kovaion’s Biryani Feast and Welcome Kit

- Oracle Journeys | An Enhancer to Employee Care Package

- Creating and Configuring Customer Details in Receivables

- AI/ML

- Oracle ERP Cloud

- Enabling Salary History Data in Employee Info | 23A Feature | Oracle HCM Cloud

- Low Code Platform

- Generation of QR Codes | Oracle BI Report

- Oracle Fusion Workforce Predictions

- Customizations on Learning Landing Page | Oracle Learning Cloud

- Oracle HCM Cloud

- Alternate Email for Employees during Job Application | ORC | QuickRead

- AP Payments Management | Oracle ERP Cloud | QuickReads

- Informative Power BI Enabled Dashboards | Immigration Practice Dashboard

- Upcoming Events

- Data Archiving

- PeopleSoft

- Template 02

- How KovaionAI’s AI Engine Automates Complex Business Decision Processes

- Senior Accounts Executive

- Corporate Social Media Platform Using Kovaion’s Low-Code Solution

- Low-Code Platform for Manufacturing Industries

- Past Events

- Bangalore Office Opening

- PeopleSoft

- Data Masking

- Oracle HCM Cloud

- Principal Engineer/Engineering Manager

- Streamlining US Federal HR Personnel Actions – PeopleSoft HCM Update Image 50

- Alternative to Appian

- Alternative to Retool

- Manufacturing Workflow Simplified with AI App Builder

- AI-Powered App Builder for Retail Industry

- Alternative to Airtable

- Alternative to Thunkable

- Alternative to AppSheet

- Alternative to Outsystems

- Alternative to Power Apps

- Alternative to Mendix

- Alternative to Kissflow

- Enterprise App Development using Kovaion App Builder | Webinar

- Alternative to Pega

- Onam Celebration

- Oracle HCM

- Document Management System

- Oracle ERP Cloud

- Front End Developer

- PeopleSoft HCM Update Image 30 – Raise Document Requests for Students to Request Grade Cards

- Webinar | Build Your Apps Powered with Gen AI

- Alternative to Zoho Creator

- Kovai MarathON: Believe In Yourself

- Oracle ERP

- WhatsApp Intelligent Platform

- Oracle Taleo

- AI/ML Agents Developer

- Technical Overview: KovaionAI’s Data Transformation Pipeline

- Oracle CloudWorld Tour Mumbai

- Independence Day Celebration

- Knowledge-Based Portal

- Taleo

- AI & ML Services

- Oracle Integration Cloud Consultant

- PeopleSoft Enterprise CS Student Records Version 9.2 – Student Program Plan Overview

- PeopleSoft India SIG 2024

- WhatsApp Marketing

- Mother’s Day Celebration

- HR Recruitment

- Data Analytics

- About Company

- Head of Business Development

- Oracle Recruiting: Simplifying Candidate Communication and Bulk Messaging

- Webinar | PeopleSoft HCM PUM 47 | Notification Composer

- International Yoga Day

- Business Events

- Automation (RPA)

- Event UK

- Create Survey Manager Experience with Oracle Core HR

- Oracle HCM Cloud Consultant

- Webinar | Unveiling the Latest Features of 23D – Oracle HCM Cloud

- Tamil New Year Celebration

- Application Development

- General

- The Future of HR with AI

- Oracle Cloud Success Navigator to Maximize Oracle Cloud Journey

- Oracle SCM Cloud Consultant

- Webinar | Mastering Low-Code for Rapid Application Development

- Data Analytics

- Women’s Day Celebration

- Unlocking Innovation with Low-Code AI Platforms

- Customer Success Manager/Solution Architect – Noida

- Oracle PeopleSoft

- Webinar | Exploring Performance Management in HCM PUM 45/46

- Pre Pongal Celebration

- AI & ML

- 15 Best Benefits of AI in Banking

- Marketing Intern

- Webinar | Customize Apps in Retail Business | Your Idea Your App

- Kovaion’s Christmas Day Celebration

- Oracle HCM Cloud Onboarding – Complete Guide

- Oracle HCM Cloud Payroll Consultant

- Webinar | Oracle P2P: Significance in Business Operations & their Stages

- Kovaion Day | 14th Year

- Home

- Top 15 Advantages of AI in Business 2025

- Head of Strategic Sales

- Data to Dashboard: Low Code Integration & Analytics Brilliance | Webinar

- Men’s Day Celebration

- Leadership Team

- Absence Management in Oracle HCM Cloud

- Oracle Business Development Manager – UK

- Webinar | Remote Worker & Lockdown Framework in PeopleSoft HCM Update Image 46

- Halloween Day Celebration

- Culture

- Talent Management in Oracle HCM Cloud – Complete Guide

- Quality Engineering Lead

- Webinar | PeopleSoft HCM Update Image 46 | PUM Highlights

- Diwali Celebration

- Career

- How KovaionAI’s Drag-and-Drop Interface Accelerates Enterprise App Development

- PeopleSoft Campus Solution Consultant

- App Development Made Easy: Achieve 5X Savings in Time & Cost | Webinar

- Independence Day Celebration

- Contact Us

- Global Human Resources (HR) in Oracle HCM Cloud

- Oracle Fusion HCM Reporting & Analytics Developer

- Oracle ME: Employee Engagement & Retention Made Easier | Webinar

- World Chocolate Day

- Privacy Policy

- Oracle Strategic Workforce Planning – Complete Guide

- Head of People & Culture

- Simplify App Development with Low Code Platform | Webinar

- Coimbatore Office Opening

- CSR

- AI-Powered Decision-Making Transforming Retail Operations

- Product Manager

- Webinar | Self-Service Absence Management | PeopleSoft PUM Image 45

- Care for PeopleSoft

- 10 Benefits of Artificial Intelligence in Healthcare | KovaionAI

- Technical Product Manager for App Builder Platform

- Quick Reads

- Webinar | Enhancing Employee Learning using Oracle Learning Cloud

- 5 Ways KovaionAI is Revolutionizing Fintech Application Development

- Junior Oracle HCM Cloud Consultant

- Blog

- Webinar | PeopleSoft PUM 43/44 | Exploring Self Service Functionalities

- 6 Mobile App Development Platforms with Advanced AI Capabilities

- PeopleSoft Administrator

- Identify & Engage on Employee Attrition | Kovaion Biz Intelligent Tool

- Low Code App Development Platform

- PeopleSoft Asset Management – Complete Guide

- PeopleSoft Campus Solution Consultant Job Opening | Kovaion

- Zero-Code App Builder

- PeopleSoft India SIG 2023

- Best Oracle ERP Consulting Firms and Implementation Experts

- Junior Oracle DBA

- No-Code App Builder

- Modern Day Business Integration with Wootz

- PeopleSoft Enterprise Performance Management (EPM) – Comprehensive Overview

- Project Manager

- Newsletter

- Experience the Excellence – Deploy PeopleSoft Chatbots

- Filter Action Reasons by User Roles on Redwood Pages

- Oracle HCM Cloud

- Business Development Lead

- Modern Way of Handling Employee Data – Job Data Modernization

- Streamlining Approval Rule Migration in Oracle Fusion HCM with SOA Composer

- KovaionAI

- Customer Success Manager

- Automate Data Masking with Oracle HCM Cloud Extensions

- 7 Cloud-Based Integration Platforms that Save Hours of Development Time

- PeopleSoft Junior Associate

- Workplace Safety First – Track Incidents and Illness effectively using PeopleSoft

- Creating Multiple Templates in a Single RTF File

- Explore the New Functionalities of the 21A Release of Oracle HCM Cloud

- PeopleSoft DBA

- How AI is Shaping the Future of Multi-Language Customer Support

- Oracle Database Administrator

- Digitally Engage with Employees to re-design the career strategy

- 5 Enterprise Solutions you can Build in Hours with KovaionAI Builder

- Experience the Modernizations delivered in 22A HCM Cloud

- How No-Code AI is Revolutionizing the Development Process

- Oracle HCM Associate Consultant

- Leverage the Next-Gen Solution to Find RIGHT TALENT – Oracle Recruiting Cloud

- How Generative AI is Transforming Software Testing: A Complete Guide

- Top Gun – Oracle DBA

- Interlink of Oracle Time and Labor & Oracle Project Costing – ‘How’ and ‘Where’ of the data flow?

- Recalculate Rate Component and Salary Details Using Run Rates-based Salary Process – Oracle HCM Cloud

- PeopleSoft Techno Functional Consultant – Noida

- A Holistic view of Teams’ availability – PeopleSoft Team Calendar (HCM PUM 42)

- Insights into Graphical Representation in Oracle Data Intelligence

- MODERNIZE your PeopleSoft and SAVE up to 38% with OCI

- Top 8 Data Visualization Platforms for Business Intelligence in 2025

- Senior Full Stack Developer

- Oracle HCM Cloud Absence Management – “Value-Added” features to Offer in 22B/C

- Oracle ERP Cloud Implementation Lead

- Leverage the latest of PeopleSoft FSCM – PUM 43/44

- PeopleSoft Payroll: Ultimate Solution for Streamlined Payroll

- Senior Business Development Executive

- Digitalize the Workforce Appraisal

- PeopleSoft Customer Relationship Management (CRM) – Ultimate Guide

- PeopleSoft Global Payroll Consultant

- Automate Employee Performance with Oracle HCM Cloud

- Top 10 AI-Powered CRM Solutions Reshaping Customer Experience

- PeopleSoft Top Gun

- Best Oracle HCM Cloud 22D Webinar | For Free

- PeopleSoft vs. Workday – A Comprehensive ERP Comparison

- Embarking on Journeys with HCM Cloud Checklist | Free Webinar

- Oracle ERP Consultant

- The Beginner’s Guide to Choosing the Right Low-Code Platform

- Unlocking Autocomplete Rules in HCM Cloud

- Oracle HCM Senior Consultant

- Why Oracle Continues to Invest in PeopleSoft – Key Reasons Explained

- Engage your customers with Kovaion’s Intelligent WhatsApp Communication

- PeopleSoft Supply Chain Management – Expert Guide

- HRIS vs. HRMS vs. HCM: Key Differences Explained

- Optimizing Human Capital with PeopleSoft HCM Solutions

- Top 12 No-Code Website Builders that will Dominate the Market in 2025

- Top 9 Industries Benefiting from Low-Code App Builders

- Top 15 AI Tools in HR in 2025

- Top 15 AI Analytics Tools to Use in 2025

- Top 8 Workflow Automation Tools for Small Businesses in 2025

- Top 10 Ticketing Tools for Streamlining IT Support in 2025

- Employee Joining Dashboard – Navigation Collection

- Top 7 Low-Code Platforms for Building Enterprise-Grade Applications in 2025

- 10 Emerging No-Code Platforms to Watch in 2025 for Smarter App Development

- PeopleSoft Insights – Empowering Position Management with HCM PUM 48

- Oracle CloudWorld Tour 2025 – Mumbai: A Glimpse into the Future of AI & Cloud

- AI Meets Customer Engagement: Personalized Support like Never Before

- How AI is Shaping the Future of Multi-Channel Helpdesk Solutions

- 7 Essential Tips for Implementing Generative AI in Business

- Exploring the Role of WhatsApp Smartflow in Automating Customer Service

- PeopleSoft HCM Update Image 50 – Pre-Boarding: Enabling Employees to Complete Tasks Before Joining

- Manufacturing Workflow Simplified with KovaionAI App Builder

- Top 5 AI-Powered Helpdesk Software for Businesses to Boost Efficiency in 2025

- How KovaionAI Builder Transforms Banking Operations with Intelligent Automation

- Why Low-Code and AI are the Perfect Match for Modernizing IT Infrastructure

- Best 7 Procurement Management Software

- 7 Top-Rated E-commerce Mobile App Builders

- Top 15 IT Asset Management Software

- Top 10 Ways Oracle HCM Cloud Drives Digital Transformation in HR

- Top 9 HR Automation Tools in 2025

- How to Choose the Best Oracle PeopleSoft Consulting Firm

- How to Choose an Oracle HCM Implementation Partner

- How AI in Banking is Shaping Customer Experiences

- PeopleSoft vs. Oracle HCM Cloud – What’s the Right Choice for Your Business?

- How to Effectively Integrate Payroll with Your Oracle HCM System

- PeopleSoft Campus Solutions in Higher Education Explained

- Top 10 Reasons Customers Choose Oracle Cloud HCM

- Top 9 Appian Alternatives in 2025

- PeopleSoft Migration to OCI (Oracle Cloud Infrastructure)

- Top 10 Retool Alternatives in 2025

- Top 8 Airtable Alternatives in 2025

- Streamlining Recruitment and Employee Engagement with an AI-Powered HR Hub

- PeopleSoft HCM Update Image 49 – Fluid Leave Donations on a Mobile Device

- PeopleSoft Accessibility Help: Empowering All Users with Inclusive Features

- Transform your Business with AI Workflow Automation 2025

- PeopleTools 8.61 – Fluid Prompt Page Enhancements

- Transforming Asset Lifecycle Management with Fluid Enhancements – PeopleSoft FSCM PUM 51

- Enhancements to the Expenses WorkCenter in Fluid – PeopleSoft FSCM PUM 51

- Performance Management Enhancements – PeopleSoft HCM PUM 50

- PeopleSoft HCM PUM 50 – Year-End Insights for U.S. Payroll

- PeopleSoft HCM PUM 50 – Additional Details in Job Offer

- Employee Retention Strategies – 30 Ways to Retain Top Talent

- PeopleSoft HR Helpdesk Solution Management Insights

- AI-Driven Analytics: Powering Smarter Decision-Making Across Industries

- Enhancing Customer Experience in Banking with Smartflow Integrations

- SharePoint for Business Process Automation with KovaionAI Builder Platform

- Streamline Support | How AI-Driven Help Desks Improve Customer Satisfaction

- Configuration Frameworks – Application Engine Plug-ins

- Why Low-Code Solutions Are Critical for the Future of Banking Automation

- Transforming Processes with Workflow Automation

- Compose Email and Text Messages Using AI Assist

- PeopleSoft HCM PUM 48 – Enhancements in Manage Job Posting Page

- Unlocking Success with Oracle Cloud Success Navigator

- 13 Ways AI Helpdesk Will Improve the Customer Experience in 2025

- 10 Best Workflow Automation Software in 2025

- Top 7 Helpdesk Ticketing Software for Your Business

- Why Time Management Tools Are a Must-Have in HR Operations for 2025

- The Role of AI in Driving Advanced Data Management Strategies

- Employee Retention – All you need to know

- Digital Transformation in Business | Comprehensive Guide

- Digital Transformation in Utilities – Benefits, Trends, Use Cases

- Enterprise Digital Transformation: Benefits, Challenges, Steps, & Types

- Digital transformation in Finance: Benefits, Challenges, Predictions & Trends

- Digital Transformation in the Retail Industry – Examples, Impacts & Trends

- Digital Transformation in the Hospitality Industry – Impacts, Importance, Challenges Examples & More

- Digital Transformation in Education – Benefits, Strategies & Trends

- Transforming Business Processes with AI-Powered Knowledge Management Systems

- Revolutionizing Patient Care: How AI-Powered Data Management Drives Healthcare

- How AI-Powered Knowledge Hubs Are Transforming IT Workflows

- Creating Extensions for Oracle Cloud Apps using VB Studio

- How to Create an App for Your Business in 12 Easy Steps

- Report Viewing with BIP Panel Drawer Integration in Journeys – Oracle HCM Cloud 24B Update

- No-Code Automation and How it Benefits Your Business with Kovaion’s App Builder Platform

- Top 10 Software Solutions for Automating Procurement Processes

- How to Create Your Own Social Media App Using Kovaion’s Social Media App Builder

- Comprehensive Guide | Learning Management System (LMS) for Higher Education

- Oracle AI Agent and its Role – A Comprehensive Guide

- How Finance Low-Code Platforms Transform Financial Services

- Digital Transformation in Healthcare – Complete Guide

- Top 10 Learning Management Systems for Higher Education

- Enterprise Knowledge Management System – Comprehensive Guide

- How to Request Additional Documents from Candidates | Oracle HCM Cloud

- How No-Code AI Simplifies the Development Process

- Digital Transformation in Manufacturing – Comprehensive Guide

- Transform Your Business with Kovaion No-Code Software

- Top 8 No Code AI Tools

- Reasons to Select Kovaion for Your Mobile App Development

- Best 10 Document Management Software for Law Firms

- Effective Knowledge Management Systems for Small Businesses

- Top 7 No-Code Tools Every Product Manager Should Know

- AI Vector Search in Oracle Database 23ai

- Data-Driven Decision Making in HR | How Is AI Transforming It?

- AI is Transforming the Future of Human Resources | AI in HR

- Artificial Intelligence (AI) in Healthcare & Medical Field | Complete Guide

- Digital Transformation in Banking Industry – Comprehensive Guide

- Understanding PeopleSoft Integration Broker

- Top 8 No-Code AI App Builders in 2025

- Steps & Best Practices for a Successful Oracle ERP Cloud Implementation

- ERP Consulting | All you need to know

- Top 10 Healthcare Consulting Firms & Insights You Need to Know

- Understanding Digital Transformation Consulting – Complete Guide

- The Role of Low-Code and AI in Enhancing Educational Innovation

- The Power of Agility Through Low-Code Process Orchestration

- Maintaining Educational Details Made Easy with Kovaion’s Document Management System

- Top 9 Knowledge Management Tools for Efficient Business Operations

- The Best PeopleSoft Consulting Firms for Business Transformation

- Top 7 Key Benefits of Low-Code Platforms for Enterprise Businesses

- Streamlining Supplier Payments: Simplifying Third-Party Disbursements

- Setting Up A Rates-Based Salary Basis | Oracle HCM Cloud

- Top 9 Technical Documentation Tools for 2025

- Top 7 Thunkable Alternatives in 2025

- Top 6 AppSheet Alternatives in 2025

- PeopleSoft HCM PUM 49 | Enhancing Employee Header Display

- Top 7 GitBook Alternatives in 2025

- Best Oracle HCM Partners and Resellers

- How Oracle Cloud HCM Transforms Healthcare Workforce Management?

- Best Oracle HCM Consulting Firms for Business Growth

- Key Features of Redwood User Experience in Oracle Fusion HCM

- Top 5 Outsystems Alternatives in 2025

- Top 5 Power Apps Alternatives in 2025

- Efficient Workflow Automation with Low-Code

- AI in Mobile App Development: Transforming the Future of App Creation

- Enhancing Payroll Efficiency with ‘Okay to Pay All’ | HCM PeopleSoft Image 50

- Top 5 Mendix Alternatives in 2025

- Top 5 Kissflow Alternatives in 2025

- Configuring Manual Signatures in Oracle HCM Journeys

- Top 5 Pega Alternatives in 2025

- PeopleSoft to Oracle HCM Cloud Migration | Guide

- Best Practices for Managing Versions in Knowledge-Based Portal

- Why is Redwood important? A technical perspective

- Oracle Redwood: Use Cases Across Industries and its Future

- All You Need to Know About Oracle Redwood

- AI in Human Capital Management: Innovations and Impacts

- Drill-Down Table Visualization in Low-Code Platforms

- Ensuring Secure API Integration in SaaS Development

- Oracle HCM 24C Updates | Unlocking Redwood Features

- PeopleSoft CS Image 32 | Fluid Faculty Center Enhancement | Class Roster Page

- Developing SaaS Applications using Kovaion Low-Code Platform a Step-by-Step Guide

- Exploring Robotic Process Automation (RPA) and Low-Code Process Automation: Key Use Cases and Benefits

- PeopleSoft HCM Update Image 49 | General & Onboarding Dashboard Insights

- Step-by-Step Guide to Building a Web App with a Low-Code Platform

- 7 Strategic Benefits of Implementing DevOps in Your Organization

- Overcoming Manufacturing Challenges with Low-Code: A Comprehensive Overview

- How Low-Code Platforms are Revolutionizing Manufacturing Processes?

- Creating Templates in a Knowledge Management System (KMS)

- PeopleSoft HCM PUM 49: Enhancing Global Payroll with COBOL Trace Tool for Identification

- PeopleSoft HCM PUM 49 | Introducing the Preferred Names Functionality

- Enhance Feedback with AI Assistance in Oracle HCM Cloud

- What Platform Is Best for Building Web Apps with an Existing Database, With Limited Code?

- The Rise of Low-Code Platforms and Their Influence on SaaS

- PeopleSoft HCM Update Image 49 | Recruiting Enhancement

- PeopleSoft HCM PUM 49 | Streamlined Benefits Enrollment Usability Enhancements

- PeopleSoft HCM PUM 48 | Global Payroll & Absence Management | Element Trace Viewer

- Oracle 24A | Redwood Feature Enabled in Goal Creation Page Using AI Assistance

- How AI-Powered Low-Code Platforms Are Transforming Innovation?

- Best 5 Angular Low-Code Platforms for Developers in 2025

- How Low-Code/No-Code Modernization Enhances Security and Performance

- How to Build an Enterprise App without Coding?

- What Are the Best Low-Code Platforms for Native App Development?

- Are low-code mobile app platforms only good for prototyping apps?

- Kovaion’s Low-Code Platform: Integrated with Microsoft SharePoint

- Reducing Technical Debt with Low-Code Solutions

- What Kind of Apps do you make using Low-Code/No-Code Tools?

- Best Low-Code Development Platforms for Microsoft SharePoint

- Best way to Develop a Rapid Application Development for Business Needs

- Successfully Transfer Absence Balance After a Global Transfer

- Integrating a Customized Dashboard into Oracle Benefits Self-Service

- Oracle PeopleSoft | Setting Up Alerts for Pending Approvals

- PeopleSoft FSCM 50 | Travel Authorization and Expense Management

- PeopleSoft HCM PUM 49 | Configurable Time Summary to Fluid Timesheet

- 5 Ways Low-Code Application Development Platforms Are Revolutionizing Business

- 10 Low-Code Web App Builders to Simplify Development

- Best FREE Low Code/No Code App Development Platforms

- Top 8 Low-Code Solutions for Building Enterprise Web and Desktop Apps

- Reflecting on Kovaion’s Logo Evolution | Nanda Kumar Speaks

- Top App Builder Platforms for the Education Sector

- 11 Best App Builder Platforms for Hospitality

- How to build applications without coding?

- Best 7 Zero-Code Platforms for Startups

- Top 10 Zero-Code Platforms Transforming Businesses Today

- Best 10 Zero-Code Platforms for Small Business

- Top 8 Zero-Code Platforms for Manufacturing

- What is Zero-Code Platform and Why Should You Care?

- Exploring OpenAI Integration in Low Code Platform

- Why Zero-Code App Development Platform is important?

- Why Your Business Needs Zero-Code Development?

- Understanding Zero-Code Platforms

- Customizing Page Accessibility with Redwood Theme’s Visual Builder

- Exploring PeopleSoft’s Retirement Planning Updates

- Best No-Code Platform for Manufacturing

- The Best No-Code Platform for Banking

- How Does No-Code App Development Builder Work?

- Best 7 Codeless App Builders in Retail

- Best 8 No-Code Mobile App Builders in 2025

- Crafting Engaging Tooltips: Enhancing User Interaction and Understanding

- Best Low-Code Platform for the Retail Industry

- 7 Powerful No-Code Platforms for Business Transformation

- Why Retailers Are Embracing Low-Code Platforms

- Reshape the Retail Future with Low-Code/No-Code

- The Power of Low-Code Platforms in the Retail Industry

- No-Code Form Builders for Small Businesses

- Top 10 Low-Code Platforms to Build Your Next App

- Key Reasons for Pro Developers to Adopt Low-Code

- MyWork Hub at Emids: Intuitive and Unified Platform for Talent & Culture

- Document Management System

- Generative AI vs Traditional AI

- Performance Evaluation Tracking Made Easy in Kovaion’s Low-Code Platform

- Process Automation with a Low-Code Platform | Workflow

- PeopleSoft HCM PUM 48 | Fluid Person Data Edit for Approvers

- Oracle’s Continuous Support & Innovation for PeopleSoft till 2035

- Why Startups Should Start Using Kovaion’s Low-Code Platform?

- Import of Loqate and Non-Loqate Geographies in Oracle HCM Fusion

- Unleash Performance with Redis in Kovaion’s Low-Code Applications

- The Growing Impact of Generative AI on Low-Code/No-Code Development

- What is Rapid Application Development

- Explore Jobs on Oracle Maps with Oracle Recruiting Cloud (ORC) 23D Release

- Oracle Enhances HCM Data Loader: 23C and 23D Updates

- Building a Help Desk Application with Kovaion App Builder

- 10 Ways Low-Code Platforms Can Boost Your Development Workflow

- How Kovaion’s HR Module Streamlines your Recruitment Process

- Geofencing Unleashed: Elevating Time Management in HCM Cloud Web Clocks

- The Evolution of Signature | Modernizing HR Practices | Oracle HCM Cloud

- Understanding Low-Code No-Code (LCNC) Platforms

- Comprehensive Guide to No Code Development

- Security Migrations Made Easy using Oracle HCM Cloud

- Top 8 Low-Code Platforms for Healthcare

- Best Low-Code/No-Code Platforms Every Developer Should Know

- 7 Best No-Code Development Platforms for Small Businesses

- Oracle 23D | Redwood Experience for Compensatory Plan Adjustments

- Best 10 No Code Platforms in SaaS

- Best Low-Code Platforms for Small Business in 2025

- Best Low-Code Platforms in SaaS

- Oracle BI Reports | Incorporating Barcodes via RTF & Administration Setup

- Enhancing Managerial Oversight with Strategic Absence Planning

- PeopleSoft HCM PUM 47 | Personal Data Modernization

- PeopleSoft HCM PUM 47 | Time and Labor Component Lockdown

- Enhancing Low-Code Forms with Third-Party API Integration

- Enhancing Wellness in Oracle HCM through Smart Watch Integration

- How to Secure Enterprise Mobile Apps

- Magic Quadrant for Enterprise Low-code Application Platforms 2025

- Low-Code Development Platform Software with Data Mapping

- Best 5 No-Code Development Platforms in 2025

- Best 8 No-Code Development Platforms for Enterprise Businesses

- Best 9 Low-code Platforms for Startups

- Top 8 Low-code Development Platforms on a Budget to Use in 2025

- Best 7 Low-Code Dashboard Builders in 2025

- 7 Best Low-Code Mobile App Development Platforms in 2025

- Top 7 Low Code Platforms for Enterprises

- Top 7 Low-code Platforms for Banking

- Oracle ERP Cloud | Multiperiod Accounting Invoices

- PeopleSoft | PeopleTools 8.61 Key Highlights

- Triggering Notifications | Mass Allocation of Journeys via Rest API

- Future of Low-Code/No-Code App Development Platforms

- Project Cost Management: The Art of Budgeting and Expense Control

- Low-Code Platform for Automating Business Processes in the Manufacturing Industry

- Top 5 Low-Code Test Automation Tools in 2025

- The Top 7 Industries that Can Benefit from Using a Generative AI App Builder

- Generative AI vs. LLMs: What’s the Difference?

- Top 5 Zoho Creator Alternatives

- Top 7 Use Cases of Low-Code Automation Across Industries

- 5 Popular Rapid Application Development Tools in 2025

- Top 8 Best Generative AI App Builders 2025

- What is LLM and How does it Work?

- How to Build an App using Generative AI App Builder

- Revolutionizing Retail and Wholesale: Unleashing the Power of Low-Code Automation

- Citizen Developers vs. Professional Developers | The Difference

- How Low-Code Solutions Can Reduce Costs in Healthcare

- Best No-Code App Builders in 2025

- The 7 Best App Building Software for Small Businesses

- Streamlining Retail and Wholesale Operations with Low-Code Automation

- Benefits of Low Code in Manufacturing

- 9 Best Mobile App Development Tools & Software

- 4 Use Cases of Low-Code in Healthcare

- Creation of Contracts in Oracle Cloud ERP

- Comprehensive Guide to Low Code Development | All you need to know

- When Your Company Needs to Invest in Low-Code Automation?

- Low-Code/No-Code Examples & Use-Cases

- 10 Best Low-Code Development Platforms to Use in 2025

- Time and Labor and Absence Management Integration

- Oracle HCM Cloud – Expense Restricting Rate Limits in Expense

- No-Code vs. Low-Code vs. High-Code vs. Zero-Code | Differences

- Streamlining Managerial Tasks with PeopleSoft HCM’s Direct Reports Tile

- Oracle ERP Cloud Evaluated Receipt Settlements (ERS)

- Oracle ERP Cloud Touchless Buying

- Revamped Learning Page Experience for Learners & Managers | Oracle Learn

- Leveraging Python & Oracle Functions for Serverless Excellence

- 7 Must-Have Dashboard Features for Business

- Oracle CloudWorld 2023: Discover the Latest News

- Enhancing HR Management with Power BI: A Comprehensive Analytics Report

- Revolutionizing Recruitment with AI-Powered Resume Matching Chatbot

- Navigating Data Mapping in Oracle Integration Cloud

- PeopleTools 8.59 | NavBar Menu Sorting

- Updated Workflow Transaction with Workflow Rules Report

- PeopleSoft HCM PUM Image 46 | Employee Header Configuration

- PeopleTools 8.59 and 8.60 | Process Monitor Changes

- Transaction Reversal in PeopleSoft Asset Management – Capability to Reverse Incorrect Transactions

- Fluid Compensation History – A revolutionary change in ESS | PeopleSoft

- PeopleSoft Process Flow Basics

- Self-Service Address Configuration Page | PeopleSoft

- Related Content Service Framework | PeopleSoft

- Generating Custom XMLP Reports in PeopleSoft with Desired File Names

- Oracle BI-Extract Employee Photo using Dynamic Blob

- Enhanced Payables Management with Business Unit Management | PeopleSoft FSCM PUM 46

- Generating Employment Agreement Letter Through the Checklist

- Enhanced Data Lockdown Framework | PeopleSoft HCM PUM 46

- Exploring Oracle Grow in Oracle ME

- Implementing Compensatory Type Absence

- Empower your Workforce with Effortless Learning Through Auto-Enrolment

- Kovaion has been recognized by Oracle as a “PeopleSoft Partner”

- Enabling Metrics in Requisition Overview | Oracle Fusion Recruiting Cloud

- PeopleSoft HCM Update Image 46 | PUM Highlights

- PeopleSoft HCM PI45 Absence Management through Self Service

- 5 Powerful Form Builder Features of Kovaion’s Low Code Platform

- Best Practices of UI/UX Creation for Startups

- Harnessing the Potential of the Placement Dashboard | From Campus to Career

- Creation of Manual Transaction and Billing Adjustments

- Generation of Letter from Document of Records When using HDL

- PeopleSoft HCM Update Image 45 | Additional Display Configurability for Company Directory

- Oracle Fusion HCM 23A | Push Reminders Made Easier with Oracle Nudge

- Oracle’s Extended Support for PeopleSoft to 2034

- Oracle ME | Guides, Connects, Support Employees

- PeopleSoft HCM Update Image 45 | Display Remote Worker Vaccination Visualization

- Employee Self Service – Flexible Schedule Change | PeopleSoft HCM PUM Image 44

- PeopleSoft HCM | Personalize My Team for Manager

- Test Data Generation Tool

- 10 Signs your Business Needs a Low Code Development Platform

- Oracle Cloud 22D Release Updates – Oracle Recruiting Cloud (ORC)

- Elevate Employee Experience Using Journeys | Oracle ME

- PeopleSoft FSCM Update Image 45 | Manage Receipts

- Back-to-Back Wins of PeopleSoft Innovator Award 2022 & 2023

- PeopleSoft Innovator 2023: Karnataka Bank & Kovaion

- PeopleSoft PUM Image 45 | Performance Management for Mobile Users

- Deep Dive into PeopleSoft Questionnaire Framework

- Dynamic Tile in PeopleSoft

- WhatsApp Promotional Messages | WhatsApp Marketing Messages Examples

- Oracle HCM Cloud Implementation | Absence Setup Migration

- Creation of BIP Report | Leveraging Excel Adapters | Oracle Fusion

- Billing Process of Projects with Contracts | Oracle ERP Cloud

- Automated Calendar Group Creation – PeopleSoft HCM PUM 33 Feature

- Revolutionize your Business with Mobile Responsive Evaluation Management Questionnaires

- PeopleSoft HR Notification | Announcements Made Easy

- Compute Salary components with system-driven metrics

- Operational excellence with intuitive PT 8.58 Kibana Analytics & Rapid Cloud Deployments

- Seamless Document Processing with E-Signature using Oracle HCM cloud checklist tasks

- PeopleSoft HCM PUM Image 34 | Feature Highlights & Enhancements

- PeopleSoft Test Framework (PTF) as part of PeopleTools 8.58 | New Features Overview

- Free Implementation of Workforce Health and Safety in Oracle HCM Cloud

- Onpremise PeopleTools 8.58.03 Available for Download

- Dig Deeper into Fluid Extended Absence Self Service | Oracle PeopleSoft PUM 31 feature

- PeopleSoft HCM PUM 33 | Features & Enhancements | Staying Current with PeopleSoft Updates

- What’s excitingly new in PeopleTools 8.58? – A sneak peek into PeopleTools 8.58 Highlights

- Fluid HCM Company Directory | Features & Usability improvements

- Kovaion Consulting | Corporate Profile

- Kovaion's Global Contribution Towards Customer Adoption by Focusing on Oracle Star Products

- Dynamic impact of Internet of Things (IoT) in HR

- Accelerate your interview process with Evaluation Management & Microsoft Office 365

- BI Schedule Trigger – Dynamic Job Scheduling

- Incorporate your Business with Oracle Blockchain Cloud Services

- Optimizing Evaluation Process in Taleo | Kovaion

- Artificial Intelligence in HR processes recuperating

- Enhancing recruitment & learning experience using Oracle & LinkedIn collaboration

- Enhance & Upgrade the robust Oracle HCM Cloud with R13

- My Experience with #Sangam17

- SQL Update on PSURLDEFN, Application Server and GetURL(URL.XXXX) – All connected

- Oracle OpenWorld 2014

- PeopleSoft Health and Safety – Enhancement & Modernization

- Automated Generation of Employee Letter from Document of Records

- Leverage “Fluid Discussion Service” for effectual cross team

- A decade of IT Services: Kovaion Celebrates its 10th Anniversary

- Modern & Fluid Job Data Page on PeopleSoft HCM PUM 36

- Kibana Analytics on PeopleSoft General Ledger – PeopleSoft FSCM Image 37

- A smart way to act on Performance pending notifications

- Payroll processing for “Leave of Absence” – PeopleSoft PUM 33

- Oracle HCM – Validate User inputs on-the-go using Groovy Expressions

- Handling Talent Evaluation in Global transfer with Oracle HCM Cloud

- HR Business Partner Feature – PeopleSoft HCM PUM 31

- Unified Sandbox – A Simplified approach for Customization

- Autocomplete Rules – A New tool in HCM Experience Design Studio

- Delve Deep into Drop Zones | Extension to Classic Foundation Pages

- Audit password changes using Oracle HCM Cloud scheduled process

- PeopleSoft HCM PUM 35 Feature Highlights | Kovaion

- Avoid Customization – Mask Sensitive Data just by Configuration

- Communicate Effectively With Your Employees | Kovaion

- Accelerate PSFT HCM Transformation by managing your Customizations

- Deep Dive into SQL Query Logs to Improve BI Performance

- PeopleSoft Fluid Announcement Tile | Kovaion

- PeopleTools 8.58: Isolate Customizations to AppEngine programs via Plug-ins

- Embark Your Security Journey with Location Based Access

- Build intuitive visualizations using PeopleSoft Kibana

- Creating a Job Requisition in a Few Clicks | Oracle 22A Update

- Auto-Provision Areas of Responsibility (AOR) in HCM Cloud

- One-Click Solution to Handle Mass DOR Downloads in HCM Cloud

- Employee Calendar makes tasks easy – PeopleSoft HCM 42 feature

- Leverage Absence Management to handle ESS Reimbursements

- Systematize Recurring Time Off with the Help of Oracle HCM Cloud

- Green IT – The Future Ray of Hope for Globalization Sustenance

- Remote Worker | PeopleSoft HCM Image 40

- Kovaion Day – Embarking on the 12th year

- HCM PUM 39 Kibana Analytics Overview of Payroll Costs

- HCM PUM 38 gives a nicer way to synchronize Job Skills to your Talent Profile

- Connections – A New User Experience for Directory

- PeopleSoft Intelligent Chat Assistant from Oracle (PICASO)

- Embarking on Journeys with HCM Cloud Checklists

- PeopleTools 8.59 Overview

- Password Verification – Let Users Access Checklists in a Secure Way

- PeopleSoft Global Search – Step Wise Configuration

- PUM 37 Enhancement – More on Page and Field Configurator

- Delve Deep into Drop Zones – PeopleSoft PUM 34 Feature

- Oracle HCM Survey – To align People and Business strategy

- PeopleSoft HCM PUM 43 Feature | Quick Calculation for NA payroll

- Create Minimal Career Template | Prior 23B Update

- 5 Ways to Engage Your Employees | WhatsApp Business App

- Sensitive Data Access Audit | Oracle HCM Cloud | 21B Release

- Oracle Learn – Leaderboard | All You Need to Know

- Oracle Digital Assistant | What it offers for HCM Cloud Customers

- PeopleTools 8.59 | Exploring Search & Navigation Features

- PeopleSoft PUM 43 | Absence Balance & Forecast Feature Enhancement

- Approvals on the Go

- PeopleSoft Event Mapping Framework

- PeopleSoft | Fluid Dashboards

- PeopleTools 8.56 – Classic Plus

- PeopleTools 8.55 – Navigation Collection and Tile Wizard

- PeopleSoft Smart HR Templates

- Creating Linked Servers in SQL Server 2012 to SQL Server 2000

- Onboarding in Taleo Business Enterprise | Hiring New Employees

- Kovaion Had a Great Show #Sangam15

- Fusion HCM Data Loader | Seamless On-Premise to Cloud Transition

- Cloud Talent Management Solution | Oracle Taleo Business Edition (TBE)

- Integrated Recruiting Reports in Taleo | Analytics Made Easy

- Career Section in Taleo Business Enterprise-Smart Career Site Manager

- PeopleSoft Approval Framework | Line Level Approval

- PeopleSoft | Drill-Down PS Query

- PeopleSoft Composite Query

- PeopleSoft Fluid User Interface

- SQL Update on PSURLDEFN, App Server & GetURL(URL.XXXX) – All connected

- PeopleSoft Outlook Integration

- PeopleSoft Paycheck Modeler

- PeopleSoft Interwindow Communication

- UKOUG EMEA PeopleSoft Roadshow 2013 | Event Article

- Cumulative Feature Overviews (CFO) For PeopleSoft 9.2

- Understanding Automatic Enrolment

- HMRC RTI – Oracle PeopleSoft | Kovaion

- PeopleSoft Test Framework

- All you Need to Know About Data Archival | Kovaion
